Algorithm: RVO2

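We selected Reciprocal Velocity Obstacles (RVO) and implemented it with the Python-RVO2 library, a Python binding of the standard RVO2 C++ library. The approach builds on the foundational paper "Reciprocal Velocity Obstacles for Real-Time Multi-Agent Navigation" (van den Berg et al., ICRA 2008). The RVO2 library itself implements the later ORCA formulation of the same idea, which selects each velocity by solving a small linear program.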

Why Velocity Space?

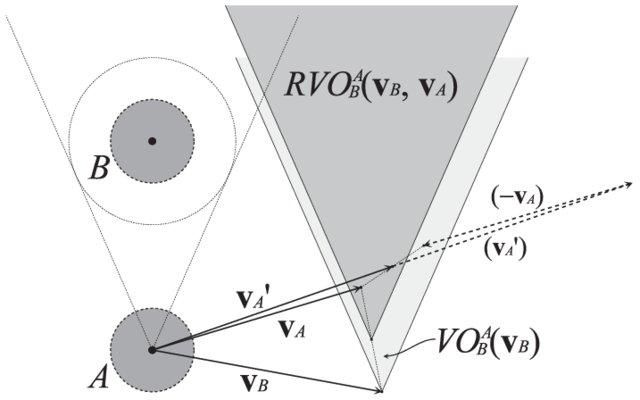

Unlike traditional pathfinding that plans in position space, RVO2 operates in velocity space. For each nearby agent, it constructs a "Velocity Obstacle"—a cone of velocities that would lead to collision within time horizon \( \tau \). The algorithm then selects the velocity closest to the preferred (goal-directed) velocity that lies outside all forbidden cones.
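As a minimal sketch of the membership test behind a velocity obstacle (not the library's implementation), the function below checks whether a candidate velocity for agent A would bring it within the combined radius of agent B at some time in \( [0, \tau] \), assuming B keeps its current velocity. The names `in_velocity_obstacle`, `combined_radius`, and `tau`, and all numeric values, are illustrative assumptions.

```python
import math


def in_velocity_obstacle(p_a, p_b, v_a, v_b, combined_radius, tau):
    """Return True if v_a leads to a collision with B within tau seconds.

    Assumes both agents are discs and that B keeps velocity v_b.
    """
    # Work in B's frame: relative position of B and relative velocity of A.
    px, py = p_b[0] - p_a[0], p_b[1] - p_a[1]
    vx, vy = v_a[0] - v_b[0], v_a[1] - v_b[1]

    # Time of closest approach along the relative motion, clamped to [0, tau].
    speed_sq = vx * vx + vy * vy
    t = 0.0 if speed_sq == 0.0 else (px * vx + py * vy) / speed_sq
    t = max(0.0, min(tau, t))

    # Separation at that time; a collision means the discs overlap.
    dx, dy = px - vx * t, py - vy * t
    return math.hypot(dx, dy) < combined_radius


# Head-on approach: A at the origin moving +x, B four metres ahead moving -x.
head_on = in_velocity_obstacle((0, 0), (4, 0), (0.3, 0), (-0.3, 0), 0.4, tau=10.0)
# Same geometry, but A veers so the relative velocity misses B's disc.
veer = in_velocity_obstacle((0, 0), (4, 0), (0.3, 0.3), (-0.3, 0), 0.4, tau=10.0)
# Same head-on geometry with a short horizon: the collision is too far away to count.
short_horizon = in_velocity_obstacle((0, 0), (4, 0), (0.3, 0), (-0.3, 0), 0.4, tau=2.0)
```

Here `head_on` is forbidden while `veer` and `short_horizon` are allowed. Truncating at \( \tau \) is what turns the infinite cone into a region that ignores collisions far in the future.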

The Oscillation Problem

The original Velocity Obstacle (VO) approach treats other agents as passively moving obstacles. When two agents both apply VO independently, each dodges as if the other will hold its course, so together they overcorrect. Once both have dodged, their original velocities look safe again, so both revert, detect the collision risk again, and dodge again, creating oscillatory "dances."

RVO solves this by having each agent take responsibility for only half of the avoidance effort. Geometrically, the apex of the VO cone is translated from \( v_B \) to the midpoint \( \frac{v_A + v_B}{2} \), so that when both agents follow RVO, their combined adjustments produce a collision-free trajectory without oscillation. The paper establishes three properties for a pair of agents:

- Collision-Free: If both agents choose velocities outside each other's RVO on the same side, the resulting velocities are guaranteed collision-free.

- Same-Side Selection: If each agent picks the velocity closest to its current velocity outside the RVO, both automatically choose the same side to pass.

- Oscillation-Free: After choosing new velocities, the old (preferred) velocity remains inside the new RVO, preventing the reversion that causes oscillations.
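The properties above can be checked numerically with a small sketch. It relies on the definition from the RVO paper that a new velocity \( v'_A \) lies in the reciprocal velocity obstacle exactly when \( 2v'_A - v_A \) lies in the plain VO. The helper names, the finite horizon `tau`, and the numbers are assumptions chosen for illustration, not values from our implementation.

```python
import math


def collides(rel_pos, rel_vel, combined_radius, tau):
    """True if moving at rel_vel closes to within combined_radius during [0, tau]."""
    px, py = rel_pos
    vx, vy = rel_vel
    speed_sq = vx * vx + vy * vy
    t = 0.0 if speed_sq == 0.0 else (px * vx + py * vy) / speed_sq
    t = max(0.0, min(tau, t))
    return math.hypot(px - vx * t, py - vy * t) < combined_radius


def in_rvo(p_a, p_b, v_a, v_b, v_new, combined_radius, tau):
    """True if v_new lies in A's reciprocal velocity obstacle induced by B.

    v_new is in the RVO exactly when 2*v_new - v_a is in the plain VO,
    i.e. the VO cone with its apex moved from v_b to (v_a + v_b) / 2.
    """
    rel_pos = (p_b[0] - p_a[0], p_b[1] - p_a[1])
    mirrored = (2 * v_new[0] - v_a[0], 2 * v_new[1] - v_a[1])
    rel_vel = (mirrored[0] - v_b[0], mirrored[1] - v_b[1])
    return collides(rel_pos, rel_vel, combined_radius, tau)


# Head-on pair, 4 m apart, combined radius 0.4 m.
p_a, p_b = (0.0, 0.0), (4.0, 0.0)
v_a, v_b = (0.3, 0.0), (-0.3, 0.0)
# Each agent sidesteps slightly to its own right (half of what plain VO needs).
new_a, new_b = (0.3, -0.04), (-0.3, 0.04)
r, tau = 0.4, 10.0

current_in_rvo = in_rvo(p_a, p_b, v_a, v_b, v_a, r, tau)      # must change course
new_a_in_rvo = in_rvo(p_a, p_b, v_a, v_b, new_a, r, tau)      # half-dodge is allowed
new_a_in_plain_vo = collides((4.0, 0.0), (new_a[0] - v_b[0], new_a[1] - v_b[1]), r, tau)
pair_collides = collides((4.0, 0.0), (new_a[0] - new_b[0], new_a[1] - new_b[1]), r, tau)
old_in_new_rvo = in_rvo(p_a, p_b, new_a, new_b, v_a, r, tau)  # no incentive to revert
```

In this example the half-dodge `new_a` would still collide if B held its course (`new_a_in_plain_vo`), but because B makes the matching half-dodge the pair is collision-free, and A's old velocity stays inside the new RVO.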

Algorithm Selection Rationale

RVO2 was chosen because it offers collision-free guarantees (under the sensing and motion assumptions discussed below) at a per-agent cost that grows linearly with the number of neighbors considered, making it suitable for real-time multi-robot coordination.

Limitations & Critique

- Perfect Sensing Assumption: The theoretical guarantees require accurate relative position and velocity data. Our Neato's encoder drift violates this assumption, which is why we pivoted to open-loop playback.

- Holonomic Assumption: RVO2 assumes agents can instantly move in any direction \( (v_x, v_y) \). Differential-drive robots cannot; we implemented a P-controller to convert holonomic velocities to \( (v, \omega) \) commands, introducing tracking error.

- No Global Path Planning: RVO2 is purely local/reactive. Agents can get stuck in U-shaped obstacles or deadlocks. From our research, other systems typically pair RVO2 with a global roadmap planner.

- Static Environment Assumption: RVO2 handles moving agents that are registered in the simulation, but it assumes the static environment geometry is known in advance. Untracked dynamic obstacles (such as humans walking through) would require sensor integration that we did not implement.

Key Parameters

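The Twist conversion used in the final step of each control cycle:

    import math

    from geometry_msgs.msg import Twist


    def convert_to_twist(self, vx, vy, yaw):
        """Convert RVO2's global-frame (vx, vy) into a differential-drive Twist."""
        # RVO gives global (vx, vy). We need (v, w).
        # Heading the robot must face to travel along the commanded velocity.
        desired_heading = math.atan2(vy, vx)
        # self.normalize is expected to wrap the heading error into [-pi, pi].
        error = self.normalize(desired_heading - yaw)

        cmd = Twist()
        # Forward speed is the magnitude of the commanded velocity.
        cmd.linear.x = math.hypot(vx, vy)
        # P-controller (gain 2.0) to steer into the vector.
        cmd.angular.z = 2.0 * error
        return cmd

Each control cycle runs the following steps:

- Preferred Velocity: Each robot computes a velocity vector pointing toward its goal at maximum speed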

- Velocity Obstacle Construction: For each nearby robot, compute the cone of velocities that would cause collision within the time horizon

- Reciprocal Sharing: Each robot takes half the avoidance responsibility, shifting the cone boundary inward

- Safe Velocity Selection: Solve a linear program to find the velocity closest to preferred that avoids all cones

- Twist Conversion: Transform the global-frame velocity to robot-frame (linear.x, angular.z) commands
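The first and last steps of this list can be sketched in plain Python. This is an illustrative sketch under stated assumptions: `preferred_velocity`, `goal_tolerance`, and `normalize_angle` are hypothetical names, and `normalize_angle` is only a plausible stand-in for the `normalize` helper that the Twist conversion calls, whose actual body is not shown here.

```python
import math


def preferred_velocity(position, goal, max_speed, goal_tolerance=0.05):
    """Velocity pointing from position toward goal at max_speed.

    Returns zero velocity once the goal is within goal_tolerance
    (an assumed stopping rule, added so the robot does not jitter at the goal).
    """
    dx, dy = goal[0] - position[0], goal[1] - position[1]
    dist = math.hypot(dx, dy)
    if dist < goal_tolerance:
        return (0.0, 0.0)
    return (max_speed * dx / dist, max_speed * dy / dist)


def normalize_angle(angle):
    """Wrap an angle in radians into [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


# A robot at the origin heading for (3, 4) at 0.5 m/s.
v_pref = preferred_velocity((0.0, 0.0), (3.0, 4.0), 0.5)
# A raw heading error of 270 degrees is really a 90 degree turn the other way.
wrapped = normalize_angle(1.5 * math.pi)
```

Wrapping the heading error matters for the P-controller: without it, an error just past \( \pi \) would command a near-full rotation in the wrong direction instead of a short turn.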

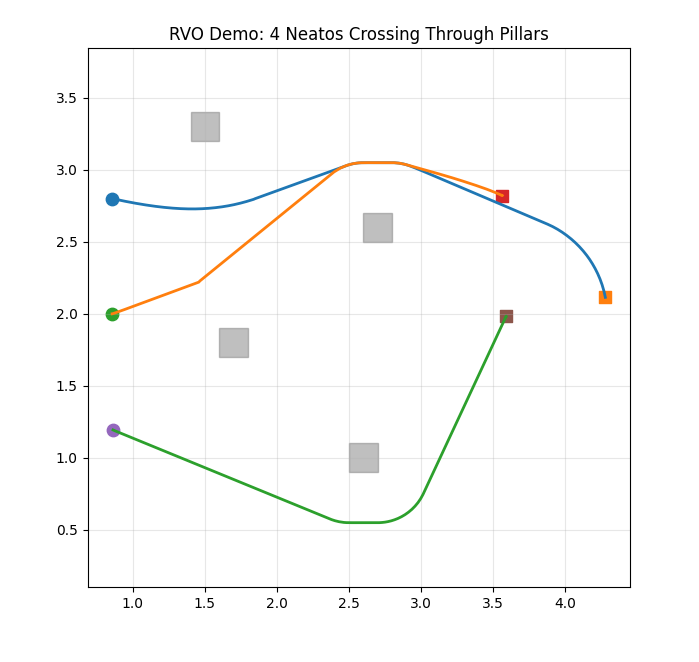

Static Obstacle Handling

RVO2 supports static obstacles as polygons. We model each obstacle as a square and pass its corner vertices to RVO2. Because obstacles never yield, each robot takes full responsibility for avoiding them (in effect, they behave like infinite-mass agents), which keeps robots clear of walls and pillars.
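A minimal sketch of building the square's corner list follows. The RVO2 documentation asks for polygon vertices in counterclockwise order, so the sketch also checks the winding with the shoelace formula. The helper names and dimensions are hypothetical. The commented calls at the end are how the polygon would be registered in Python-RVO2, given an existing simulator object `sim`.

```python
def square_vertices(cx, cy, side):
    """Corners of an axis-aligned square centred at (cx, cy), counterclockwise."""
    h = side / 2.0
    return [(cx - h, cy - h), (cx + h, cy - h), (cx + h, cy + h), (cx - h, cy + h)]


def signed_area(vertices):
    """Shoelace formula; the result is positive for counterclockwise order."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(vertices, vertices[1:] + vertices[:1]):
        total += x1 * y2 - x2 * y1
    return total / 2.0


# A 0.5 m pillar centred at (1.0, 2.0); values are illustrative only.
pillar = square_vertices(1.0, 2.0, 0.5)
area = signed_area(pillar)

# With Python-RVO2 installed and `sim` an rvo2.PyRVOSimulator:
#   sim.addObstacle(pillar)
#   sim.processObstacles()  # must be called after all obstacles are added
```

Checking the sign of the area is a cheap guard against accidentally listing corners clockwise.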

Time Horizon Parameter

The \( \tau \) parameter controls how far ahead agents look for collisions. Larger values yield smoother avoidance but require earlier deviation from preferred velocities. We tuned this to balance responsiveness with stability.
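To make the trade-off concrete, the sketch below computes the time to collision for two discs under constant velocities and compares it with \( \tau \). The function names and the numbers are assumptions for illustration; they are not the values we tuned.

```python
import math


def time_to_collision(rel_pos, rel_vel, combined_radius):
    """Earliest t >= 0 at which two discs touch, or None if they never do.

    rel_pos is p_B - p_A and rel_vel is v_A - v_B, both held constant.
    """
    px, py = rel_pos
    vx, vy = rel_vel
    # Solve |rel_pos - rel_vel * t|^2 = combined_radius^2 for t.
    a = vx * vx + vy * vy
    b = -2.0 * (px * vx + py * vy)
    c = px * px + py * py - combined_radius ** 2
    if c <= 0.0:
        return 0.0  # already overlapping
    if a == 0.0:
        return None  # no relative motion
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return None  # paths never come within combined_radius
    t = (-b - math.sqrt(disc)) / (2.0 * a)
    return t if t >= 0.0 else None


def is_forbidden(rel_pos, rel_vel, combined_radius, tau):
    """A relative velocity is forbidden if it causes a collision within tau."""
    ttc = time_to_collision(rel_pos, rel_vel, combined_radius)
    return ttc is not None and ttc <= tau


# Two robots 3 m apart closing at 0.6 m/s with a combined radius of 0.4 m.
ttc = time_to_collision((3.0, 0.0), (0.6, 0.0), 0.4)
ignored_with_short_horizon = not is_forbidden((3.0, 0.0), (0.6, 0.0), 0.4, tau=2.0)
avoided_with_long_horizon = is_forbidden((3.0, 0.0), (0.6, 0.0), 0.4, tau=5.0)
```

In this example the collision is about 4.3 s away, so an agent with \( \tau = 2 \) keeps its preferred velocity for now, while an agent with \( \tau = 5 \) already starts to deviate.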